Developer Guide¶

This guide covers local development, testing, and contributing to the SPOT Platform.

Not a deployment guide

The clone-and-build workflow described here is for contributing to the platform. It builds images from your working tree and is not suitable for production, staging, or any environment that is not a pure development sandbox. Operators must follow the Deployment guide instead.

Prerequisites¶

- Docker and Docker Compose

- GNU Make

- Git

The dev loop is driven entirely by make targets that wrap docker compose. The operator-facing spot-cli (auth, plugin, workflow, analyze, config, benchmark) is a separate tool and is not needed to develop the platform.

Getting Started¶

Initial Setup¶

# Clone the platform repository

git clone https://codeberg.org/SPOT_Project/core.git

cd core

# List available targets

make help

# Start the platform

make service:start

Development Environment¶

Start the development environment with hot reload:

# Set the development environment in .env (note: > overwrites any existing .env)

echo "APP_ENV=dev" > .env

# Start all services

make service:start

# Or use a temporary override

APP_ENV=dev make service:start

Development mode includes:

- Hot reload (code changes auto-restart services)

- Read-write volume mounts

- Debug tools (Adminer, MailHog)

- Detailed logging

- Development dependencies installed

Core Commands¶

# Platform management

make service:start # Start platform

make service:stop # Stop all services

make service:restart # Restart services

make service:status # Show service status

# Development tools

make dev:shell SERVICE=api-gateway # Shell into a service container

make dev:logs # View logs (follow mode)

make dev:logs SERVICE=api-gateway # Service-specific logs

# Code quality

make quality:lint # Run linting

make quality:format # Format code

make quality:type-check # Run type checking

Development Workflow¶

Making Changes¶

- Create a feature branch.

- Start the development environment: APP_ENV=dev make service:start

- Make code changes; services auto-reload.

- Run tests: make test

- Run quality checks: make quality:lint && make quality:format-check

Working with Services¶

Access a service shell:

make dev:shell SERVICE=api-gateway

View service logs:

make dev:logs SERVICE=api-gateway

Restart a single service after a config change:

Testing¶

Quick Start¶

# Test specific service

make test:unit SERVICE=api-gateway

# Test all services

make test:unit

# Integration tests

make test:integration

# Unit + integration

make test

# Unit tests with coverage gate (--cov-fail-under=100)

make test:coverage

Test Structure¶

Each service has its own tests:

services/{service}/

├── tests/

│ ├── conftest.py # Service fixtures

│ └── test_*.py # Unit tests

├── src/ # Source code

└── pyproject.toml # Test configuration

Platform-level tests:

tests/

├── conftest.py # Global configuration

├── fixtures/ # Shared fixtures

├── integration/ # Cross-service tests

└── performance/ # Load tests

Writing Tests¶

Example unit test:

# tests/test_analyzer.py
import pytest

from src.analyzer import analyze_email


def test_analyze_legitimate_email():
    email = {
        "headers": {
            "sender": "noreply@github.com",
            "subject": "Security alert",
        },
        "body_text": "New sign-in detected",
    }

    result = analyze_email(email)

    assert result.is_phishing is False
    assert result.threat_level == "none"

Example integration test:

# tests/integration/test_email_analysis.py
import pytest
import requests


def test_full_email_analysis(api_client, test_email):
    # Submit email
    response = api_client.post(
        "/api/v1/analyze",
        json={"email": test_email},
    )
    assert response.status_code == 200
    job_id = response.json()["job_id"]

    # Check status
    status_response = api_client.get(f"/api/v1/analyze/{job_id}")
    assert status_response.json()["status"] == "completed"
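If analysis jobs complete asynchronously, a status check made immediately after submission may still see an unfinished job. The following is a minimal sketch of a polling helper, assuming only that the status endpoint returns a JSON object with a "status" field as in the test above. The name wait_for_status, its parameters, and the default timeout values are hypothetical and not part of the platform.

```python
import time
from typing import Any, Callable, Dict


def wait_for_status(
    fetch: Callable[[], Dict[str, Any]],
    expected: str = "completed",
    timeout: float = 30.0,
    interval: float = 0.5,
) -> Dict[str, Any]:
    """Call fetch() until it reports the expected status or the timeout expires.

    fetch is any zero-argument callable that returns the decoded status payload.
    """
    deadline = time.monotonic() + timeout
    while True:
        payload = fetch()
        if payload.get("status") == expected:
            return payload
        if time.monotonic() >= deadline:
            raise TimeoutError(
                f"status was {payload.get('status')!r}, expected {expected!r}"
            )
        time.sleep(interval)
```

In the integration test, the final assertion could then be written as wait_for_status(lambda: api_client.get(f"/api/v1/analyze/{job_id}").json()), which fails with a TimeoutError instead of a flaky assertion.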

Test Configuration¶

Tests use pytest with configuration in pyproject.toml:

[tool.pytest.ini_options]
minversion = "7.0"
addopts = [
    "--strict-markers",
    "--cov-report=term-missing",
    "--cov-report=html",
    "--cov=.",
]
testpaths = ["tests"]

Coverage¶

Generate the coverage report:

# Run tests with coverage (gates at 100% per service)

make test:coverage

# View HTML report (open is the macOS command; on Linux use xdg-open)

open services/api-gateway/htmlcov/index.html

Coverage files:

- HTML: services/{service}/htmlcov/index.html

- XML: services/{service}/coverage.xml

- Terminal: shown after the run
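The XML report can also be inspected from a script. The following is a minimal sketch, assuming coverage.xml is the Cobertura-style report written by coverage.py, whose root element carries a line-rate attribute. The function names are hypothetical. The gate itself is enforced by --cov-fail-under during the test run, and this sketch only reads the result afterwards.

```python
import xml.etree.ElementTree as ET


def line_coverage_percent(xml_text: str) -> float:
    """Return overall line coverage (0-100) from a Cobertura-style coverage.xml."""
    root = ET.fromstring(xml_text)
    # The root <coverage> element stores the overall ratio as line-rate (0.0 to 1.0).
    return float(root.attrib["line-rate"]) * 100.0


def meets_gate(xml_text: str, minimum: float = 100.0) -> bool:
    """Return True if the report's line coverage is at least `minimum` percent."""
    return line_coverage_percent(xml_text) >= minimum
```

For a single service this could be called as meets_gate(Path("services/api-gateway/coverage.xml").read_text()), using pathlib.Path.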

Test Environments¶

The test targets set APP_ENV=test automatically.

The test environment includes:

- Separate database (spot_test)

- Separate Redis database (index 10)

- Separate RabbitMQ vhost (spot_test)

- Mock analyzers

- Reduced log verbosity
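A test suite can guard against accidentally running on non-test resources. The sketch below compares the resources a test session is about to use against the values listed above. The Resources class and isolation_problems function are hypothetical, and how the actual values are read from the platform configuration is left open.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Resources:
    database: str
    redis_db: int
    rabbitmq_vhost: str


# Values listed above for the test environment.
EXPECTED_TEST_RESOURCES = Resources(
    database="spot_test", redis_db=10, rabbitmq_vhost="spot_test"
)


def isolation_problems(app_env: str, actual: Resources) -> List[str]:
    """Return a list of human-readable problems; an empty list means isolated."""
    problems = []
    if app_env != "test":
        problems.append(f"APP_ENV is {app_env!r}, expected 'test'")
    if actual.database != EXPECTED_TEST_RESOURCES.database:
        problems.append(f"database is {actual.database!r}")
    if actual.redis_db != EXPECTED_TEST_RESOURCES.redis_db:
        problems.append(f"Redis database index is {actual.redis_db}")
    if actual.rabbitmq_vhost != EXPECTED_TEST_RESOURCES.rabbitmq_vhost:
        problems.append(f"RabbitMQ vhost is {actual.rabbitmq_vhost!r}")
    return problems
```

A session-level fixture could call this once and abort the run if the returned list is not empty.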

Code Quality¶

Formatting¶

# Format all services

make quality:format

# Format a specific service

make quality:format SERVICE=api-gateway

# Check formatting without modifying

make quality:format-check

Uses Ruff for Python formatting.

Linting¶

# Lint all services

make quality:lint

# Lint a specific service

make quality:lint SERVICE=api-gateway

Uses Ruff for linting.

Type Checking¶

# Type-check all services

make quality:type-check

# Type-check a specific service

make quality:type-check SERVICE=api-gateway

Uses mypy for type checking.

Project Structure¶

core/

├── services/

│ ├── api-gateway/

│ │ ├── src/ # Source code

│ │ ├── tests/ # Unit tests

│ │ ├── Dockerfile # Service image

│ │ └── pyproject.toml # Dependencies

│ ├── analyzer-orchestrator/

│ ├── mail-orchestrator/

│ ├── knowledge/

│ └── cli/ # Operator CLI

├── shared/ # Shared code

│ ├── config/

│ └── utils/

├── tests/ # Platform tests

│ ├── integration/

│ └── performance/

├── docker-compose.yml # Base compose

├── docker-compose.dev.yml # Dev overrides

├── docker-compose.prod.yml # Prod overrides

├── docker-compose.test.yml # Test overrides

└── Makefile # Dev-loop targets

Building and Docker¶

Build Images¶

# Build all services

make docker:build

# Build a specific service

make docker:build SERVICE=api-gateway

# Build the shared base image

make docker:build-base

Docker Compose¶

# Start services

make service:start

# View logs

make dev:logs

# Stop services

make service:stop

# Clean up caches and dangling artefacts

make dev:clean

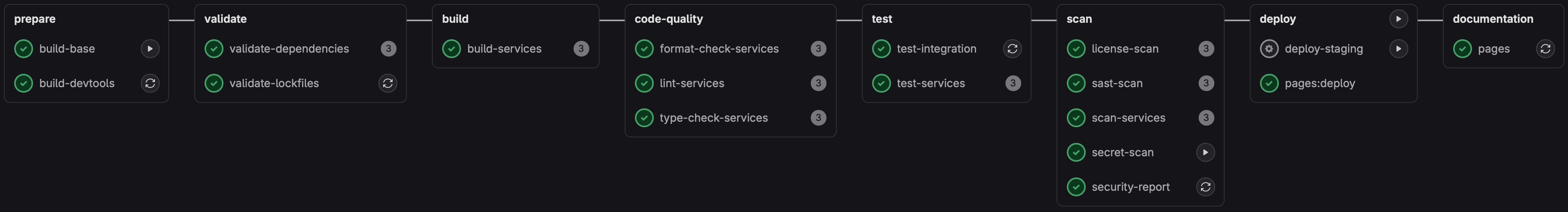

CI/CD¶

Local CI Testing¶

Run GitLab CI locally:

# Run the default pipeline

make ci:local

# Run a specific job

make ci:local JOB=test-unit

# Run the full pipeline including manual jobs

make ci:local FULL=1

See CI/CD for details.

GitLab CI¶

Pipeline runs automatically on push:

- Validate: lint, format check, type check.

- Build: build Docker images.

- Test: unit and integration tests.

- Deploy: push images to the registry.

Environment Management¶

SPOT uses APP_ENV to control the environment:

- prod: production (default).

- dev: development with hot reload.

- test: testing (set automatically by the test targets).

Switch environments:

echo "APP_ENV=dev" > .env

Or use a temporary override:

APP_ENV=dev make service:start

See Environment Configuration for details.
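The selection rule described above (three values, with prod as the default) can be sketched as follows. The resolve_app_env function is hypothetical. Treating an empty value as unset and rejecting unknown values are assumptions, not documented platform behaviour.

```python
import os
from typing import Mapping, Optional

VALID_ENVS = ("prod", "dev", "test")


def resolve_app_env(environ: Optional[Mapping[str, str]] = None) -> str:
    """Return the effective APP_ENV, falling back to 'prod' when it is unset."""
    env = os.environ if environ is None else environ
    # Assumption: an empty or whitespace-only value counts as unset.
    value = env.get("APP_ENV", "").strip() or "prod"
    if value not in VALID_ENVS:
        raise ValueError(
            f"APP_ENV must be one of {', '.join(VALID_ENVS)}; got {value!r}"
        )
    return value
```

Passing a mapping explicitly, as in resolve_app_env({"APP_ENV": "dev"}), keeps the function easy to test without touching the real process environment.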

Contributing¶

Workflow¶

- Fork the repository.

- Create a feature branch.

- Make changes with tests.

- Run quality checks: make quality:lint && make quality:format-check.

- Run tests: make test.

- Commit with a clear message.

- Push and create a merge request.

Commit Messages¶

Use conventional commits:

feat: Add support for attachment analysis

fix: Resolve authentication timeout issue

docs: Update API documentation

test: Add integration tests for workflows

refactor: Simplify configuration loading
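A commit subject can be checked against this format before pushing. The sketch below follows the general Conventional Commits shape of type, optional scope, optional "!", then ": " and a description. The allowed types are limited to the five shown above, which is an assumption. The project may accept others, and is_conventional_subject is a hypothetical helper.

```python
import re

# Assumption: only the types that appear in the examples above.
COMMIT_TYPES = ("feat", "fix", "docs", "test", "refactor")

_SUBJECT_RE = re.compile(
    r"^(?P<type>[a-z]+)"          # type, e.g. feat
    r"(?:\((?P<scope>[^)]+)\))?"  # optional scope, e.g. (api-gateway)
    r"(?P<breaking>!)?"           # optional breaking-change marker
    r": (?P<description>\S.*)$"   # colon, space, non-empty description
)


def is_conventional_subject(subject: str) -> bool:
    """Return True if the first line of a commit message matches the format."""
    match = _SUBJECT_RE.match(subject)
    return bool(match) and match.group("type") in COMMIT_TYPES
```

Such a check could run from a local commit-msg hook.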

Code Style¶

- Follow PEP 8.

- Use type hints.

- Write docstrings for public APIs.

- Keep functions small and focused.

- Add tests for new features.
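As an illustration of these points, here is a small function with type hints and a docstring that does one thing. The function sender_domain is hypothetical and not taken from the codebase. It only reuses the email shape from the unit test example in this guide.

```python
from typing import Mapping


def sender_domain(headers: Mapping[str, str]) -> str:
    """Return the lower-cased domain of the "sender" header.

    Raises:
        ValueError: if the sender address has no "@" or an empty domain.
    """
    address = headers["sender"].strip()
    _, separator, domain = address.rpartition("@")
    if not separator or not domain:
        raise ValueError(f"not a valid sender address: {address!r}")
    return domain.lower()
```

A matching unit test would assert that sender_domain({"sender": "noreply@github.com"}) returns "github.com" and that an address without "@" raises ValueError.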

Pull Request Process¶

- Ensure all tests pass.

- Update documentation.

- Add a changelog entry.

- Request review.

- Address feedback.

- Merge after approval.

Debugging¶

Debug Mode¶

Enable debug logging:

Interactive Debugging¶

Use the Python debugger:

Or use an IDE debugger (VS Code, PyCharm).
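A minimal sketch of a breakpoint using the built-in breakpoint() function (Python 3.7+), which enters pdb by default. The classify function is a toy example, not platform code. pdb needs an interactive terminal attached to the process, and how to attach one to a service container depends on the compose setup.

```python
def classify(score: float, threshold: float = 0.5) -> str:
    """Toy function used only to demonstrate where a breakpoint goes."""
    # Execution pauses here and opens pdb, unless the environment variable
    # PYTHONBREAKPOINT=0 is set, in which case the call does nothing.
    breakpoint()
    return "phishing" if score >= threshold else "legitimate"
```

At the pdb prompt, p score prints a variable, n steps to the next line, and c continues execution. Remove the breakpoint() call before committing.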

Common Issues¶

Service won't start:

# Check logs

make dev:logs SERVICE=api-gateway

# Check status

make service:status

# Restart service

make service:restart

Tests failing:

# Ensure test environment

grep APP_ENV .env # Should be test during tests

# Clean test data

docker compose down -v

make test:integration

Hot reload not working:

# Ensure dev environment

grep APP_ENV .env # Should be dev

# Check volumes are mounted

docker compose config | grep volumes

Additional Resources¶

- API Reference: API documentation.

- Environment Configuration: environment variables.

- Configuration: configuration options.

- Architecture: system architecture.